BioByte 37: cameras to non-invasively measure neural activity, deep learning for spatial transcriptomics, whole body cellular mapping in mice, 100B-scale transformer for generative protein design

Welcome to Decoding Bio, a writing collective focused on the latest scientific advancements, news, and people building at the intersection of tech x bio. If you’d like to connect or collaborate, please shoot us a note here or chat with us on Twitter: @ameekapadia @ketanyerneni @morgancheatham @pablolubroth @patricksmalone. Happy decoding!

Sprinting to your next experiment? Spouse about to give birth? Boarding a flight and realizing that you have 1% phone battery left? Hope your short-term memory is in working order:

A call for greater awareness of biosecurity and bioterrorism risks

Should biologists draw from the tech world and introduce scrum management?

An overview of diffusion models in generative chemistry for drug design

A deep learning model for spatial transcriptomics across tissue

A novel method for generating whole-body cellular and structural maps in mice

A massive transformer for generative protein design

What we read

Blogs

Governments are waking up to biosecurity risks — but we must act fast [Cassidy Nelson, Financial Times, July 2023]

Cassidy Nelson, head of biosecurity policy at the Centre for Long-Term Resilience, a UK think-tank, draws attention to the issues at the intersection between AI and biotech. In fact, the former Google CEO Eric Schmidt describes AI-designed bioweapons as “a very near-term concern”.

Last month, the UK committed £1.5B in annual funding to counter biological threats. The Pentagon is concluding its Biodefense Posture Review, to assess how prepared the US is to deal with bioweapons and pandemics.

The author asks for more, however. We need to move fast across three fronts:

Develop LLM evaluations to determine capabilities and risks. Create chokepoints to limit access to dangerous tools.

Advance efforts to detect new pathogens rapidly by developing a National Biosurveillance Network linked to a global system that can sound the alarm on potential pandemics.

Bring nations together to focus on the converging risk of AI and biotechnology.

Biosecurity experts agree that the recent technological advancements have made our prospects darker. At the same time, with mRNA and rapid sequencing we have a chance to curtail these risks.

If you’re interested in learning more about biodefense, check out our deep dive.

Should Biologists Scrum? [Vega Shah, The Aliquot, 2023]

Vega explains how Scrum, a product management methodology widely used in tech, could be applied in the wet lab to improve productivity. LabScrum, a hybrid of traditional software Scrum and best practices from research labs at the University of Oregon, showed heightened productivity and reduced stress in lab members.

So why does scrum work for labs?

It breaks down monumental tasks: large-scale, multi-year projects can lead to a cycle of perfectionism and procrastination. With sprints, labs break these huge projects into manageable steps, allowing incremental progress and providing a sense of achievement at the same time.

It creates a safe space for imperfection: sprints give researchers a chance to discuss work in progress, rather than waiting to show a perfect magnum opus, which can lead to unproductive perfectionism.

It embraces retrospectives: rather than solely focusing on what is going wrong with ongoing studies, retrospectives allow a space for “what went right, what went wrong and how to move forward”.

Diffusion Models in Generative Chemistry for Drug Design [Charlie Harris, June 2023]

This blog post covers various diffusion models in generative chemistry with a focus on drug design, and specifically, designing new molecules with desired properties.

Diffusion models are trained by taking real molecules and gradually adding noise to them until only random noise remains. A neural network then learns to reverse this process one step at a time. To generate a new molecule, the model starts from pure noise and denoises it repeatedly, so that the final samples follow a distribution similar to that of real molecules.

The article discusses three types of diffusion models:

Denoising diffusion probabilistic models (DDPMs): DDPMs are the most common type of diffusion model. They start from a sample of pure noise and remove that noise over many small steps, using a neural network trained to predict the noise that was added at each step. Each step moves the sample closer to the distribution of real molecules.

Noise-conditioned score networks (NCSNs): NCSNs train a neural network on a dataset of real molecules to estimate the score, that is, the gradient of the log-probability density of the data, at several noise levels. Because the score points toward regions where real molecules are more likely, following it from a noisy starting point (with some added randomness) yields molecules that are more likely to be real.

Stochastic differential equations (SDEs): the SDE formulation describes the noising of molecules as a continuous-time process rather than a fixed number of discrete steps, and generates molecules by solving the corresponding reverse-time equation. It provides a common framework that covers both DDPMs and NCSNs as special cases.
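To make the noising idea concrete, here is a minimal sketch of the forward (noise-adding) half of a DDPM, using only the Python standard library. It is not taken from the blog post: the linear noise schedule, the function names, and the toy three-atom coordinates are all assumptions chosen for illustration. A real model would also need a trained network to run the process in reverse.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (an assumed, commonly used schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t: running product of (1 - beta_s) for all steps s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_coordinates(x0, t, alpha_bars, rng):
    """Sample a noised copy x_t of x0 in closed form; also return the noise used."""
    a = alpha_bars[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps


betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
rng = random.Random(0)

# Hypothetical flattened (x, y, z) coordinates for a three-atom toy "molecule".
x0 = [0.0, 0.0, 0.0, 0.96, 0.0, 0.0, -0.24, 0.93, 0.0]

# Early steps barely change the coordinates; by the last step almost no signal is left.
slightly_noised, _ = noise_coordinates(x0, 10, alpha_bars, rng)
fully_noised, _ = noise_coordinates(x0, 999, alpha_bars, rng)
```

During training, a denoising network would be shown `xt` and the step index `t` and asked to predict `eps`; generation then runs the learned denoiser from pure noise back toward a molecule.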

The development of generative models in chemistry is still in its early stages, but the potential benefits are significant for drug discovery. Check out some of the papers in the References section of the blog to learn more about the various models.

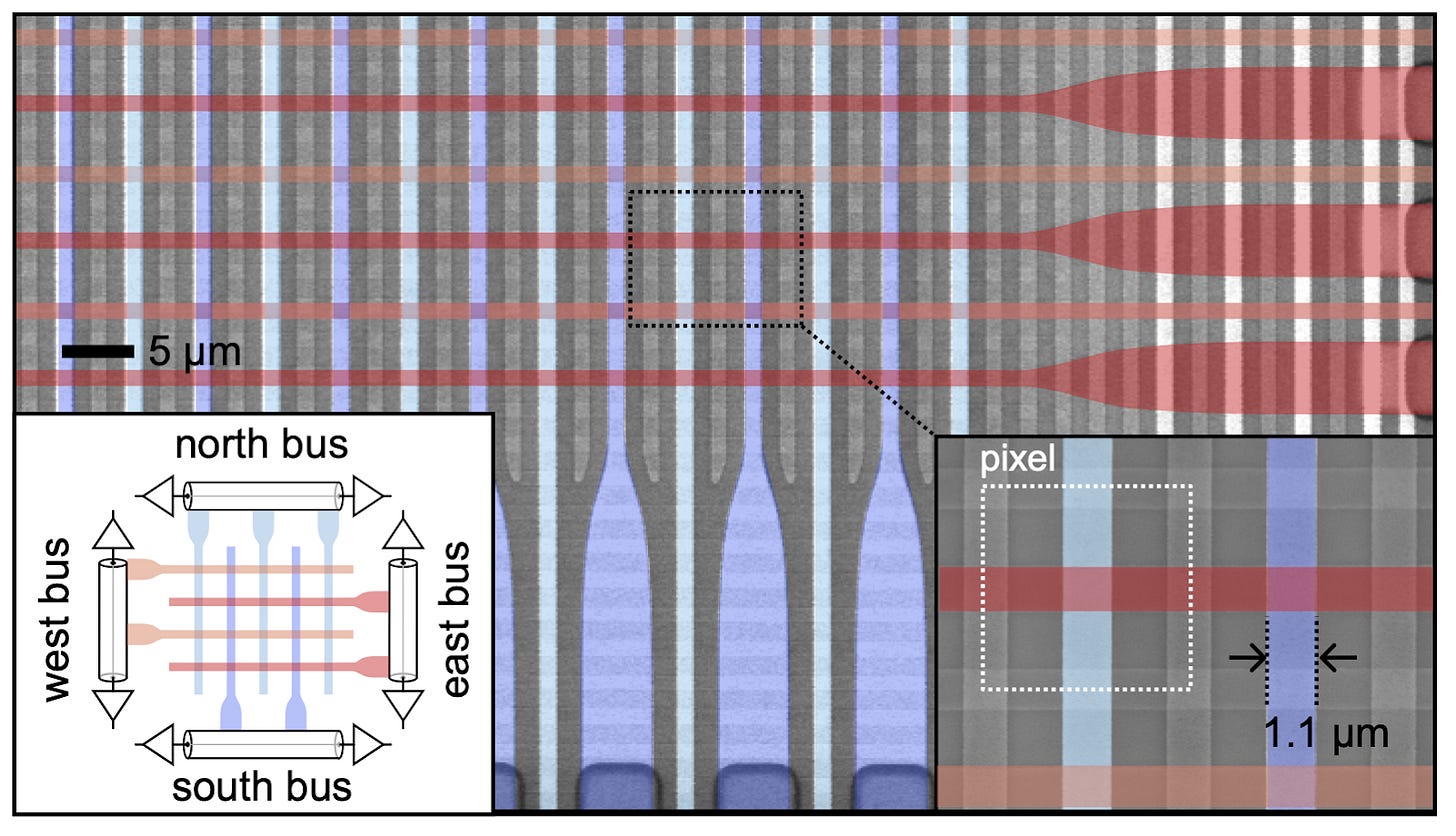

At Last, Single-Photon Cameras Could Peer Into Your Brain [Genkina, IEEE Spectrum, July 2023]

A team at the National Institute of Standards and Technology (NIST) has created a miniature single-photon camera capable of measuring light at high sensitivity and speed. One of the more interesting applications is the non-invasive measurement of neural activity. The technology uses superconducting nanowires, which lose their superconducting properties when the electrical current running through them exceeds a threshold. Each pixel of the camera is a superconducting wire with a current set just below that threshold, so that a single photon colliding with the wire will break its superconductivity and register as a detection. Compared to other non-invasive optical technologies for neural recording, this hardware has higher signal quality and sensitivity and therefore requires less signal processing. Scientists such as Roarke Horstmeyer at Duke are now trying to translate the technology out of the lab for real-world applications such as portable MRI.

Scanning electron micrograph of a superconducting-nanowire single-photon camera.

Academic papers

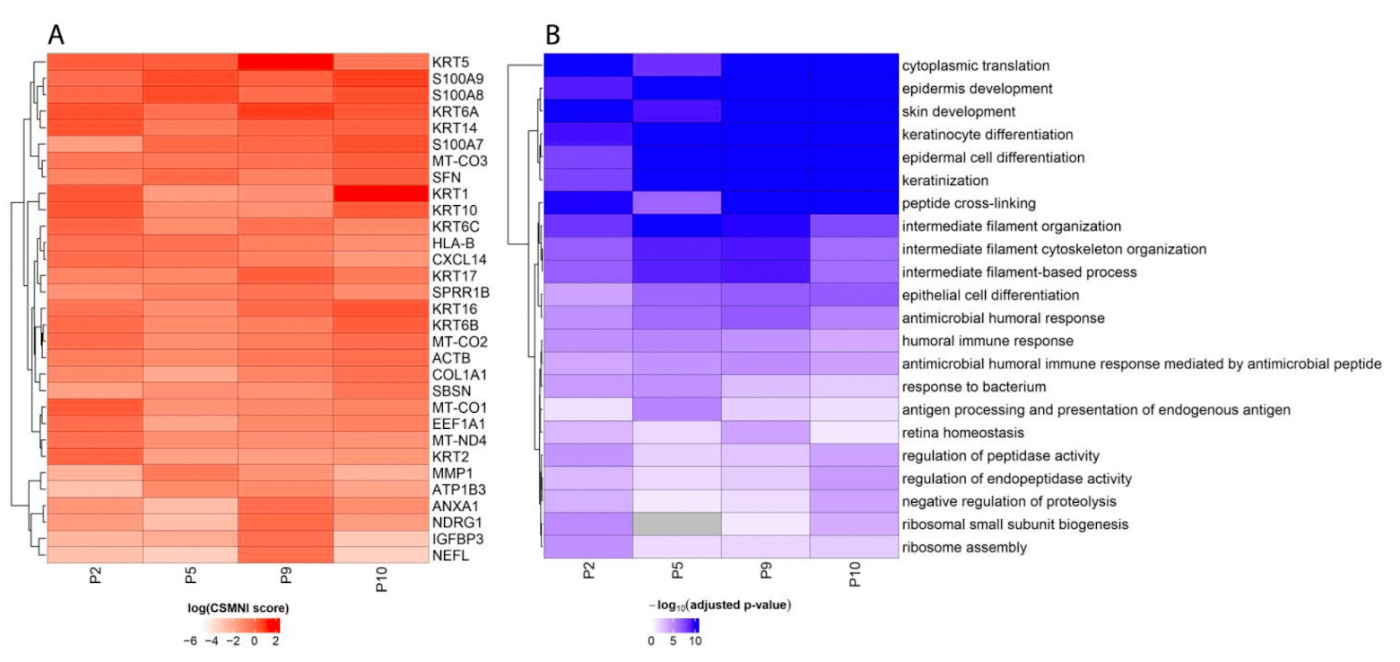

SPIN-AI: A Deep Learning Model That Identifies Spatially Predictive Genes [Meng-Lin et al, Biomolecules, July 2023]

Why it matters: Spatial transcriptomics is a powerful technique that allows scientists to study how gene expression varies across different regions of a tissue. This information can be used to understand how cells interact with each other and how tissues develop and function. However, spatial transcriptomics data can be difficult to interpret, and there is no easy way to identify the genes that are most important for determining spatial cellular organization.

A team of researchers from the University of California, Berkeley, has developed a new deep learning model called SPIN-AI that can identify spatially predictive genes (SPGs). SPGs are genes that are expressed in a specific spatial pattern and that are therefore likely to play an important role in governing cellular organization. SPIN-AI was trained on a dataset of spatial transcriptomics data from the mouse brain, and it was able to identify a large number of SPGs. The researchers found that SPGs were enriched for genes involved in cell signaling, transcription, and metabolism.

The development of SPIN-AI is a significant advance in the field of spatial transcriptomics. SPIN-AI can be used to identify SPGs in any tissue, and this information can be used to understand how cells interact with each other and how tissues develop and function.

Check out this blog post and the original paper for more on this work!

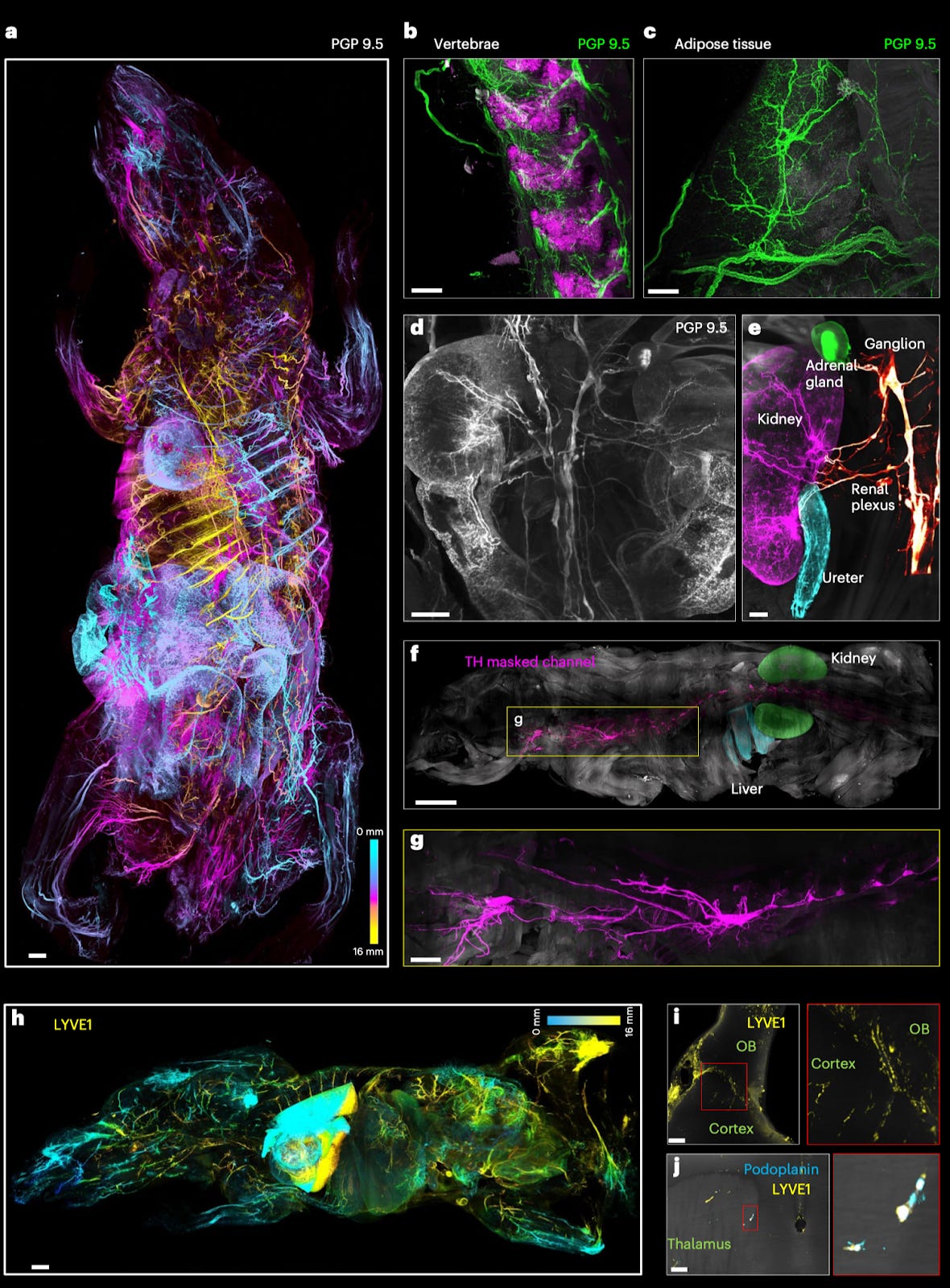

Whole-body cellular mapping in mouse using standard IgG antibodies [Mai et al., Nature Biotechnology, 2023]

Why it matters: for years, interrogating systems biology has been impeded by inherent physical characteristics of biological tissue. Although several technologies have enabled the optical clearing of human organs and model organisms (including whole rodents), adequately labeling cells and varying structures of interest has remained a challenge. In this paper, Mai et al. develop a technique, wildDISCO (wild three-dimensional imaging of solvent-cleared organs), for using any off-the-shelf antibodies to immunolabel mice as a whole. This method enables a budget-friendly and powerful means to develop cellular and structural maps in disease states, which may unearth new mechanistic understandings of pathophysiology.

The authors had previously shown that high pressure-driven permeabilization chemistry enables staining/clearing of whole mouse bodies, but only with nanobodies. They hypothesized that a barrier to antibody penetration may be a function of incomplete extraction of membrane cholesterol, and thus tested several analogs of cholesterol binders. They found that heptakis(2,6-di-O-methyl)-β-cyclodextrin significantly reduced antibody aggregation in mouse tissue and facilitated uniform staining of entire mouse bodies using a wide range of antibodies across all tissue types. Using wildDISCO, the authors were able to generate large maps of structures, including the vasculature, immune cells, lymphatic system and more.

xTrimoPGLM: Unified 100B-Scale Pre-trained Transformer for Deciphering the Language of Protein [Chen et al., BioRxiv, July 2023]

Why it matters: The largest protein language model (100 billion parameters) ever trained achieved state-of-the-art performance on 13 of 15 protein-related tasks across a range of domains including protein structure, developability (a key unmet need in antibody engineering), and function.

A group from BioMap Research published a 100-billion-parameter language model for protein design. The compute for this thing is insane: over 1 trillion tokens processed on a cluster of 96 NVIDIA A100s. The model took ~6 months to train, and likely cost millions of dollars. An important innovation in the paper is how it combines two pre-training tasks into a unified framework: a traditional masked language model objective, which predicts individual masked amino acids in a protein sequence, and a general language model objective, which predicts large spans of amino acids. This combo more comprehensively characterizes the underlying distribution of protein sequences. The results, summarized above, were impressive. That said, it is important to note that these pre-training methods were still inspired by natural language. It will be exciting to see what bio-specific pre-training methods the computational biology field develops.
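The two pre-training objectives differ mainly in what gets hidden from the model. The sketch below is a toy illustration of that difference in plain Python, not the paper's implementation: the placeholder tokens `[MASK]` and `[SPAN]`, the function names, the masking fraction, and the example sequence are all assumptions made for illustration.

```python
import random

MASK = "[MASK]"  # assumed placeholder for a single hidden residue
SPAN = "[SPAN]"  # assumed placeholder for a hidden contiguous span


def mask_residues(seq, frac, rng):
    """Masked-LM style: hide scattered single residues.

    Returns the corrupted token list and a {position: residue} dict of targets.
    """
    n = max(1, round(len(seq) * frac))
    positions = sorted(rng.sample(range(len(seq)), n))
    tokens = list(seq)
    targets = {}
    for p in positions:
        targets[p] = tokens[p]
        tokens[p] = MASK
    return tokens, targets


def mask_span(seq, span_len, rng):
    """Span style: replace one contiguous stretch with a single placeholder.

    Returns the corrupted token list and the hidden span, which a model
    would be asked to generate residue by residue.
    """
    start = rng.randrange(0, len(seq) - span_len + 1)
    span = seq[start:start + span_len]
    tokens = list(seq[:start]) + [SPAN] + list(seq[start + span_len:])
    return tokens, span


rng = random.Random(0)
sequence = "MKTAYIAKQRQISFVKSHFSRQ"  # arbitrary toy amino acid sequence

mlm_tokens, mlm_targets = mask_residues(sequence, 0.15, rng)
span_tokens, hidden_span = mask_span(sequence, 8, rng)

print(" ".join(mlm_tokens))
print(" ".join(span_tokens), "->", hidden_span)
```

In the first case the model fills in isolated positions using context on both sides, which suits understanding tasks. In the second it has to produce a long stretch of sequence, which is closer to what generative design requires.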

What we listened to

Notable Deals

BeiGene further invests in ADCs with solid tumor partner DualityBio, a BioNTech collaborator

Thermo Fisher to acquire data intelligence company CorEvitas for $900M+

Tessa Therapeutics dissolves after raising more than $200M

Astellas eyes rare ophthalmology, licensing 4DMT's viral vector for gene therapy for up to $962M

In case you missed it

Decoding Bio Snapshot 2023: The Next Generation of Bio Companies

A Startup’s Guide to Buying Companies by Travis May, Former CEO of Datavant

Rational design and combinatorial chemistry of ionizable lipids for RNA delivery [Xu et al., Journal of Materials Chemistry, 2023]

LNPs have become increasingly important in the space of genetic medicine. In this review, Xu et al. delve into the cutting-edge developments of ionizable lipids and underscore the potential of incorporating combinatorial chemistry techniques into the design and synthesis of new ionizable lipids.

What we liked on Twitter

Best R&D leaders in biotech @varma_ashwin97

Running list of biomedical language models @katieelink

Gene editing landscape @andrewpannu

Events

Bits in Bio x IndieBio NY: Bio & ML Across Modalities - July 12

Field Trip

Did we miss anything? Would you like to contribute to Decoding Bio by writing a guest post? Drop us a note here or chat with us on Twitter: @ameekapadia @ketanyerneni @morgancheatham @pablolubroth @patricksmalone